High Performance

Database Consulting

- Build, repair and optimize complex databases

- Work directly with a top-tier database expert

Database Intensive Websites

Websites and apps that interface with large databases cannot be developed the same way as regular websites, which are mostly static or have limited content management. The challenge is for the website to send a query to the back-end, have it processed quickly, and then render every cell of the result without the page feeling slow to the user.

My team has considerable experience in developing database-intensive websites and apps, having brought multiple projects from the planning stages to full-featured and optimized websites, some interfacing with millions of rows of data on the back-end.

We begin with proper database design, optimized for quick retrieval. You never want to send large amounts of data across the internet and then filter it in the web browser, because that will always feel sluggish. You want all filtering to be done on the back-end using database code (typically stored procedures), with only the end result returned, typically one page at a time.
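As a rough illustration of back-end filtering with one page returned at a time, here is a minimal sketch in Python. It uses an in-memory SQLite database as a stand-in so the example runs anywhere; the `records` table, its columns, and the `fetch_page` helper are hypothetical names invented for this example, not part of any project described here. On SQL Server the same idea would normally live in a stored procedure, with `OFFSET ... FETCH NEXT` doing the paging.

```python
import sqlite3


def build_demo_db(n_rows=1000):
    """Create a throwaway in-memory database with a hypothetical `records` table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE records (id INTEGER PRIMARY KEY, label TEXT, amount INTEGER)"
    )
    conn.executemany(
        "INSERT INTO records (id, label, amount) VALUES (?, ?, ?)",
        [(i, f"Record {i}", 100_000 + i * 1_000) for i in range(1, n_rows + 1)],
    )
    # An index on the filtered column lets the database avoid a full table scan.
    conn.execute("CREATE INDEX idx_records_amount ON records (amount)")
    return conn


def fetch_page(conn, min_amount, page, page_size=25):
    """Filter and page inside the database; only one page of rows is returned."""
    offset = (page - 1) * page_size
    cur = conn.execute(
        "SELECT id, label, amount FROM records "
        "WHERE amount >= ? "      # filtering happens in the database
        "ORDER BY id "            # a stable order keeps pages consistent
        "LIMIT ? OFFSET ?",       # only the requested page is sent back
        (min_amount, page_size, offset),
    )
    return cur.fetchall()


if __name__ == "__main__":
    conn = build_demo_db()
    for row in fetch_page(conn, min_amount=600_000, page=2):
        print(row)
```

The browser only ever receives `page_size` rows, no matter how many rows match the filter, which is what keeps the page responsive as the table grows.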

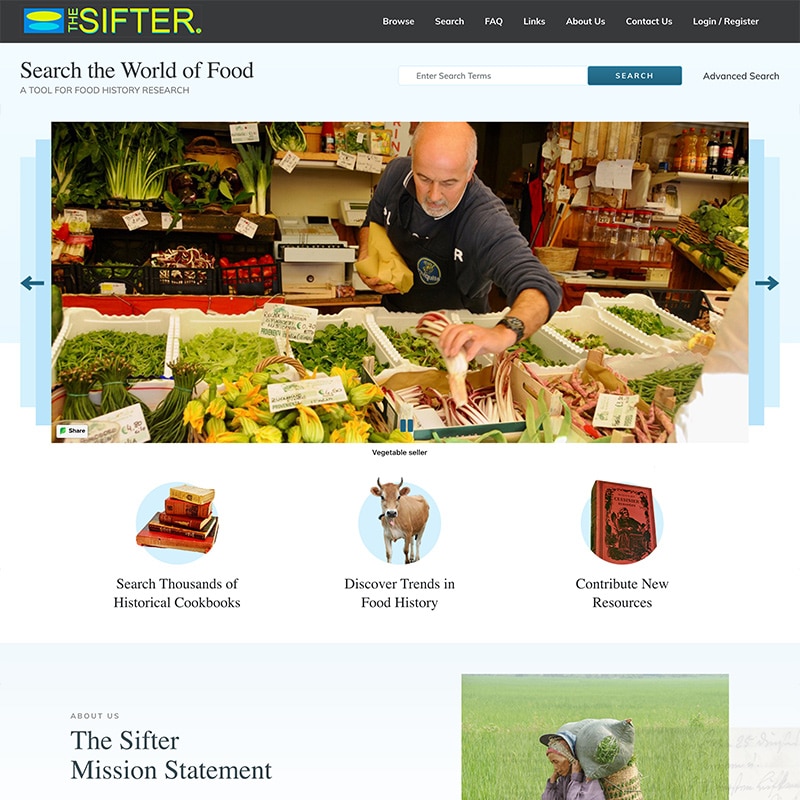

Our most recent project, The Sifter, was developed using meta-data tables in such a way that it can easily be re-purposed for any SQL Server database. It has a fast and very full-featured web interface for browsing, editing, filtering, searching and exporting. We are currently looking into creating a SaaS product for customers who want an instant web-based front-end to an existing database, as well as expanding it to work with other database systems.

For this type of project, I typically do all of the database work and design the system, then create a team of front-end developers (e.g. ASP.Net), graphic designers, and QA people to build out the user interface. If you need to build or repair a database-intensive website or app, please get in touch. I’d love to help out!

LEXINGTON MA

Work Samples

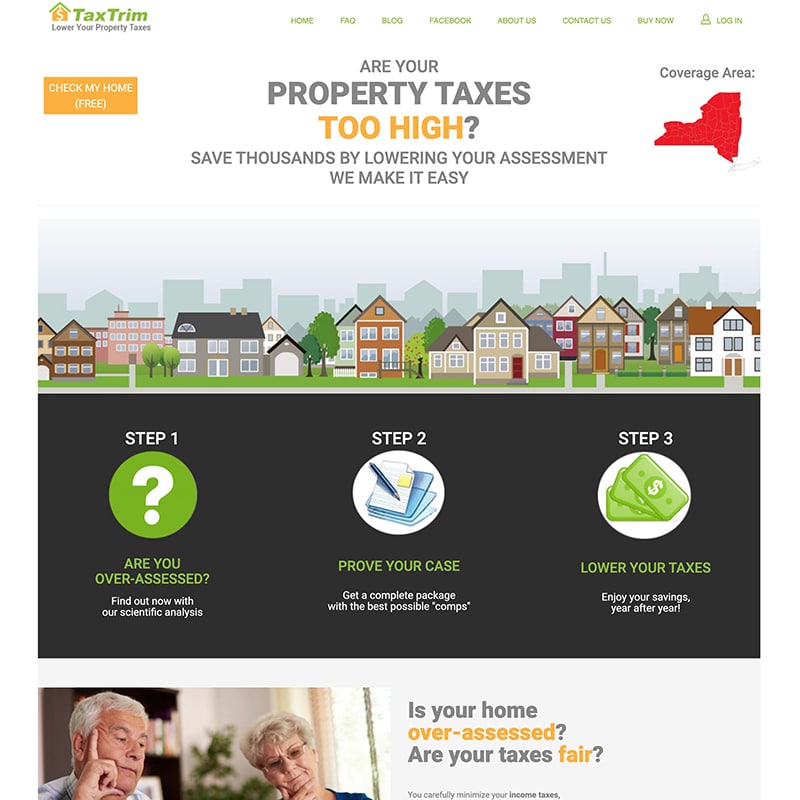

Developed over 12 years, TaxTrim is an online toolkit for determining whether your home is over-assessed compared to its neighbors, and for building an optimized property tax grievance to take to your assessor. It is backed by detailed information on millions of homes, and its customers commonly save 20-30% on their yearly tax payments. It has a SQL Server back-end and an ASP.Net front-end.

The Sifter provides a Wikipedia-like interface for research on international food history. It has detailed information on thousands of historical cookbooks, and any user can add to and edit the data. It provides full-featured search capabilities, filtering, browsing, exporting, and fast response times. To accommodate public access, it has robust mechanisms for reversing bad edits if needed. It is completely based on meta-data and may be turned into a general-purpose database front-end service in the near future. It has a SQL Server back-end and an ASP.Net front-end.